30 Days of Maps Day 20 - OpenStreetMap

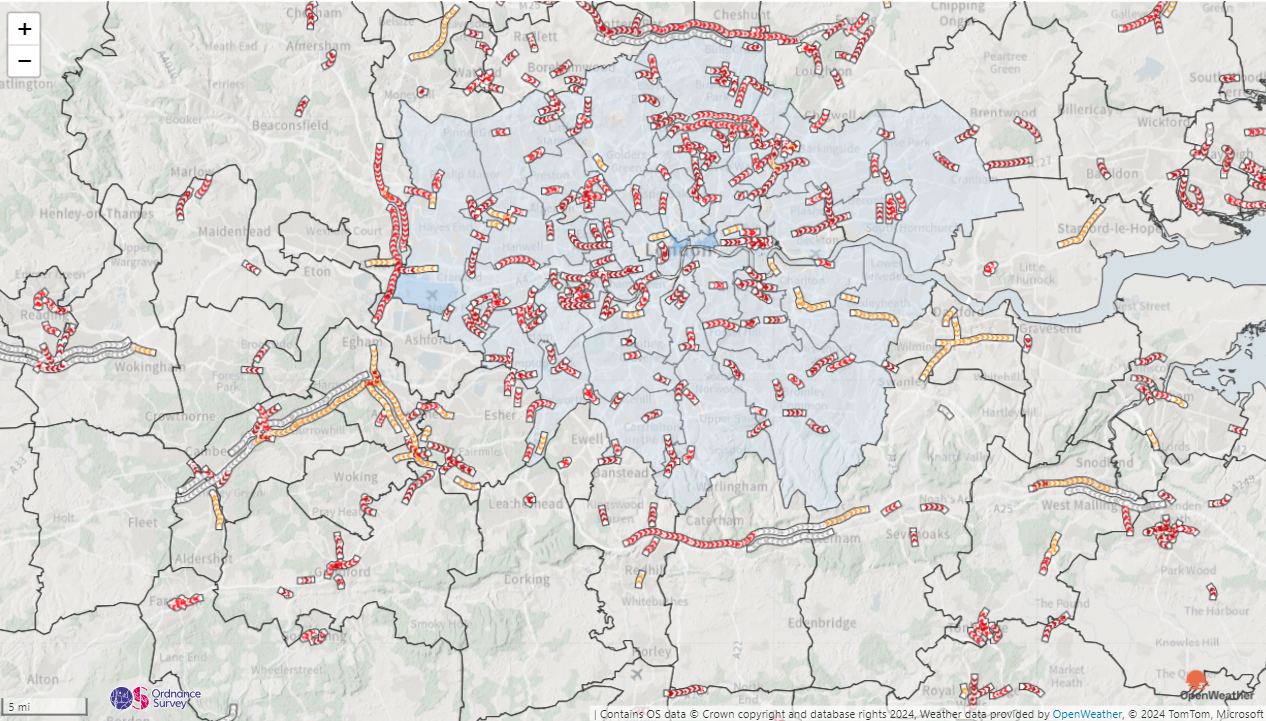

It's day 20 of the #30DayMapChallenge and today's theme is OpenStreetMap - Use OpenStreetMap data to create something. OpenStreetMap offers rich, editable data from roads to buildings and beyond. The goal is to showcase the power of this community-driven dataset.

OpenStreetMap is a great source of geospatial information - in fact, it's the data source from which we build our base maps. For today's challenge I've extracted the railway network for Europe and used the OSM relation data to determine the routes for many of Europe's train operators. There's far too much data to load into a shapefile, and too many operators to split into multiple files, so I've loaded the data as WKT into the Power BI dataset. Each section of track and each station is a row of data - there are over 3 million of them.
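To give a feel for what one-row-per-feature WKT data looks like, here's a minimal Python sketch. It isn't the pipeline used for this report: the field names (`kind`, `operator`, `route`, `name`, `maxspeed`, `wkt`), the helper functions and the sample coordinates are all made up for illustration.

```python
def linestring_wkt(coords):
    """Format a list of (lon, lat) pairs as a WKT LINESTRING (x y order)."""
    if len(coords) < 2:
        raise ValueError("a LINESTRING needs at least two points")
    return "LINESTRING (" + ", ".join(f"{lon} {lat}" for lon, lat in coords) + ")"


def point_wkt(lon, lat):
    """Format a single position as a WKT POINT."""
    return f"POINT ({lon} {lat})"


def to_rows(track_sections, stations):
    """Flatten tracks and stations into one list of rows, one feature per row."""
    rows = []
    for section in track_sections:
        rows.append({
            "kind": "track",
            "operator": section["operator"],
            "route": section["route"],
            "name": "",
            "maxspeed": section["maxspeed"],
            "wkt": linestring_wkt(section["coords"]),
        })
    for station in stations:
        rows.append({
            "kind": "station",
            "operator": station["operator"],
            "route": station["route"],
            "name": station["name"],
            "maxspeed": "",
            "wkt": point_wkt(station["lon"], station["lat"]),
        })
    return rows


if __name__ == "__main__":
    # Hypothetical sample data, not real OSM features.
    tracks = [{"operator": "Example Rail", "route": "R1", "maxspeed": "160",
               "coords": [(0.5, 51.5), (1.0, 52.0)]}]
    stops = [{"operator": "Example Rail", "route": "R1",
              "name": "Example Central", "lon": 0.5, "lat": 51.5}]
    for row in to_rows(tracks, stops):
        print(row["kind"], row["wkt"])
```

Keeping tracks and stations in a single table with a `kind` column means one dataset can drive both the line and point styling, at the cost of a few empty fields per row.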

I've added some Power BI conditional formatting, configured by slicers, so that the track width can represent the maximum speed of that section of track, and the colour can represent a route run by that operator. Selecting a specific route will also enable labels showing the station names.
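In the report this is all done with Power BI conditional formatting rather than code, but the speed-to-width idea can be sketched in Python. This is only an illustration under stated assumptions: speeds are assumed to arrive as OSM-style `maxspeed` strings (such as `160` or `100 mph`), and the width range of 1 to 6 and the 300 km/h ceiling are arbitrary values I've picked for the example.

```python
import math

MPH_TO_KMH = 1.609344


def parse_maxspeed_kmh(tag):
    """Parse a maxspeed string like '160' or '100 mph' into km/h, or None."""
    if not tag:
        return None
    parts = tag.strip().split()
    if not parts:
        return None
    try:
        value = float(parts[0])
    except ValueError:
        return None  # e.g. 'none', 'signals' or other non-numeric values
    if not math.isfinite(value):
        return None
    if len(parts) > 1 and parts[1].lower() == "mph":
        value *= MPH_TO_KMH
    return value


def width_for_speed(speed_kmh, min_width=1.0, max_width=6.0, top_speed=300.0):
    """Scale a speed linearly into a line width, clamped to the width range."""
    if speed_kmh is None:
        return min_width  # unknown speed: draw the thinnest line
    fraction = min(max(speed_kmh / top_speed, 0.0), 1.0)
    return min_width + (max_width - min_width) * fraction


if __name__ == "__main__":
    for tag in ["160", "100 mph", "none", ""]:
        print(repr(tag), width_for_speed(parse_maxspeed_kmh(tag)))
```

Falling back to the minimum width for missing or unparseable speeds keeps those sections visible on the map without implying they are fast lines.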

For the stations, I've mocked up a performance KPI, so they are shown in traffic light colours according to their status. I've also added some random alerts, so you may see warning triangles at various locations.

As OpenStreetMap data is crowd-sourced, the data quality isn't always consistent, so I've filtered the report to a selection of train operators that have largely complete data.

If you'd like to see how the report was built, you can download it here.